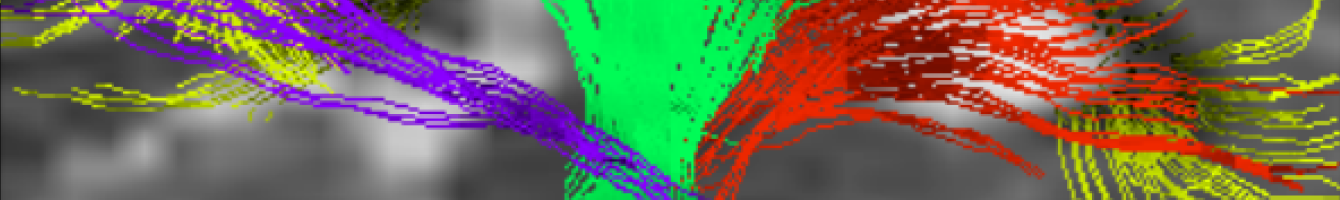

A step forward in understanding hydrocephalus

Hydrocephalus is a devastating structural neurological disorder marked by enlarged brain ventricles due to the accumulation of cerebrospinal fluid. Current diagnosis and treatment of hydrocephalus are inadequate because the mechanisms behind its development are poorly understood. Hydrocephalus may be accompanied by low intracranial pressure, and it remains a clinical challenge to differentiate this disease from […]
